This study presents the security threat model of the Modular Articulated Robot Arm (MARA) from Acutronic Robotics, identifies security threats, and provides guidance to address or mitigate them. Developed in the context of an industrial application, this robotic case study takes place in an arbitrary plant where ROS 2 robots collaborate and work alongside professionals. In particular, the application considered comprises a MARA modular robot performing a pick & place activity.

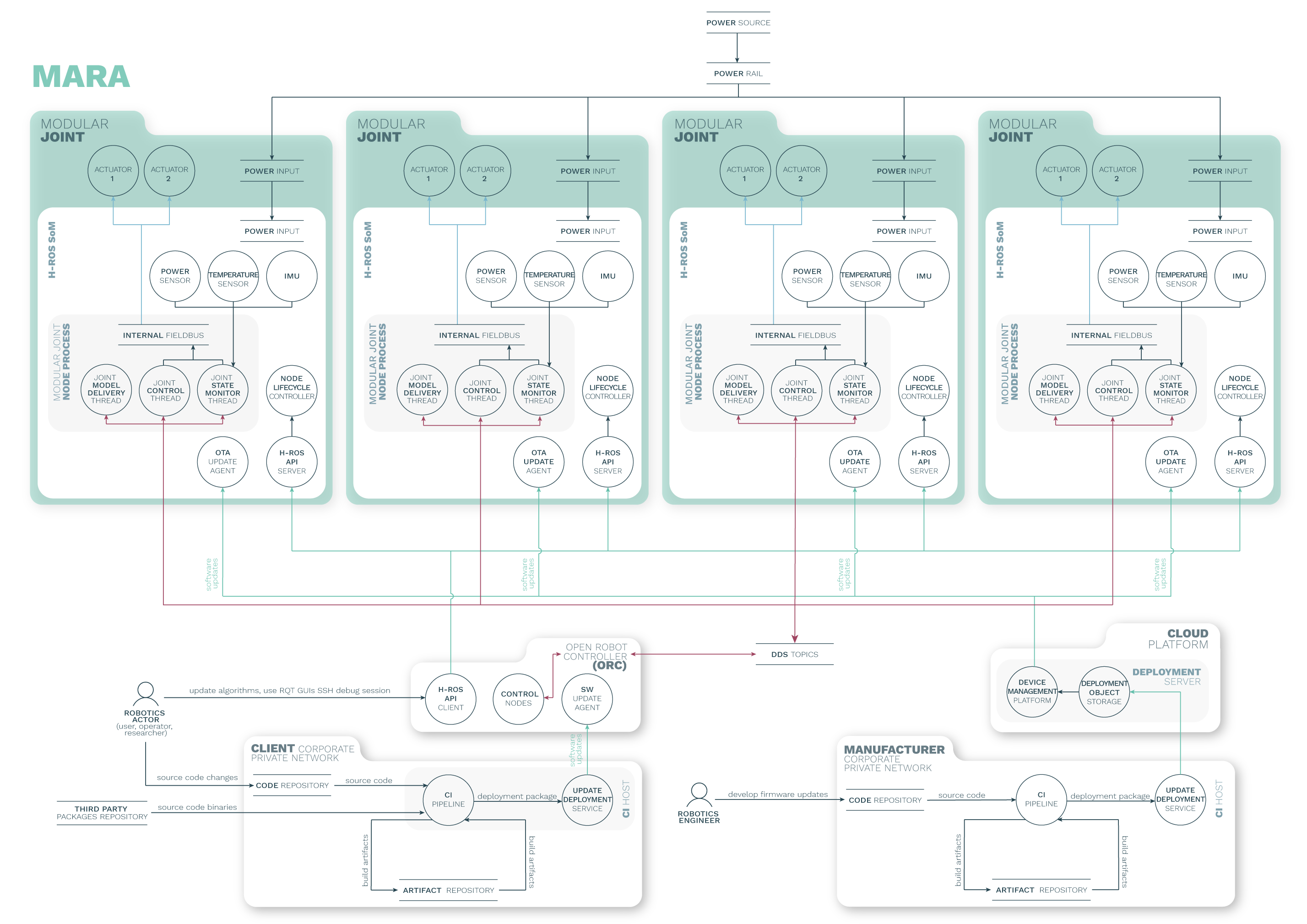

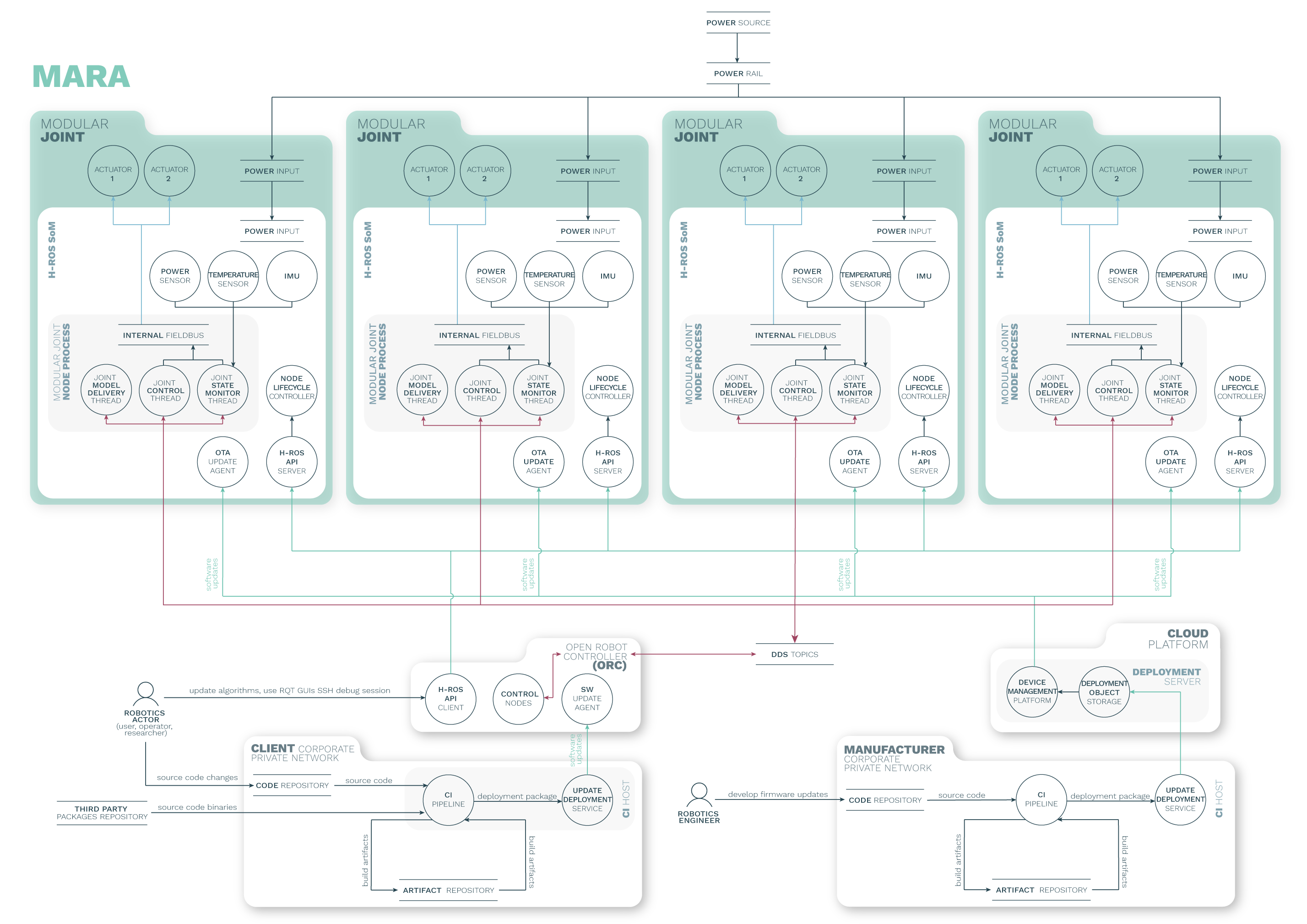

MARA is a 6 Degrees of Freedom (6DoF) modular and collaborative robotic arm with a payload of 3 kg and repeatability below 0.1 mm. The robot can reach angular speeds of up to 90 degrees/second and has a reach of 656 mm. The robot contains a variety of sensors on each joint and can be controlled from any industrial controller that supports ROS 2 and uses the HRIM information model. Each of the modules contains the H-ROS communication bus for robots enabled by the H-ROS SoM, which delivers real-time, security and safety capabilities for ROS 2 at the module level.

By exposing the robot's internal network across each module, MARA can be physically extended in a seamless manner while maintaining real-time, security and safety characteristics. This, however, brings new challenges, especially in terms of security and safety.

Assets represent any user, resource (e.g. disk space), or property (e.g. physical safety of users) of the system that should be defended against attackers. Properties of assets can be related to achieving the business goals of the robot. For example, sensor data is a resource/asset of the system and the privacy of that data is a system property and a business goal.

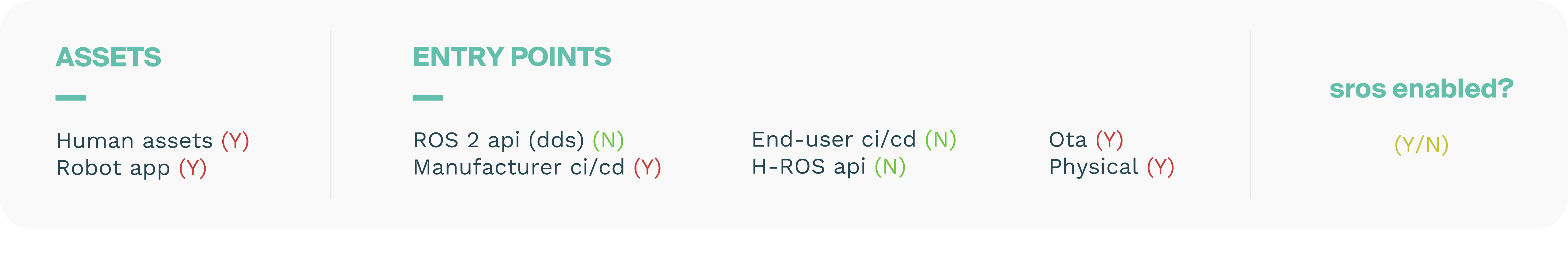

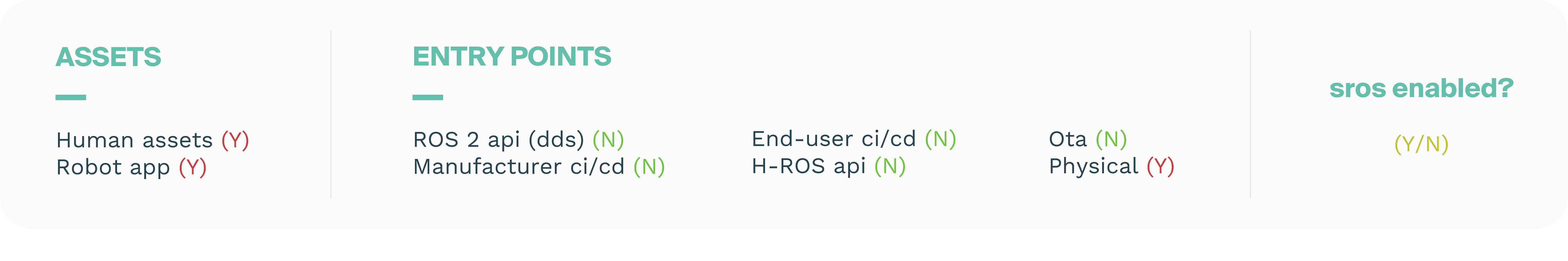

A threat model should detail the different aspects of the robot in terms of Hardware, Software and Networking. The external actors and Data assets are also included but often described independently. These aspects are captured by describing the assets within the use case. The following ones are included in the complete report:

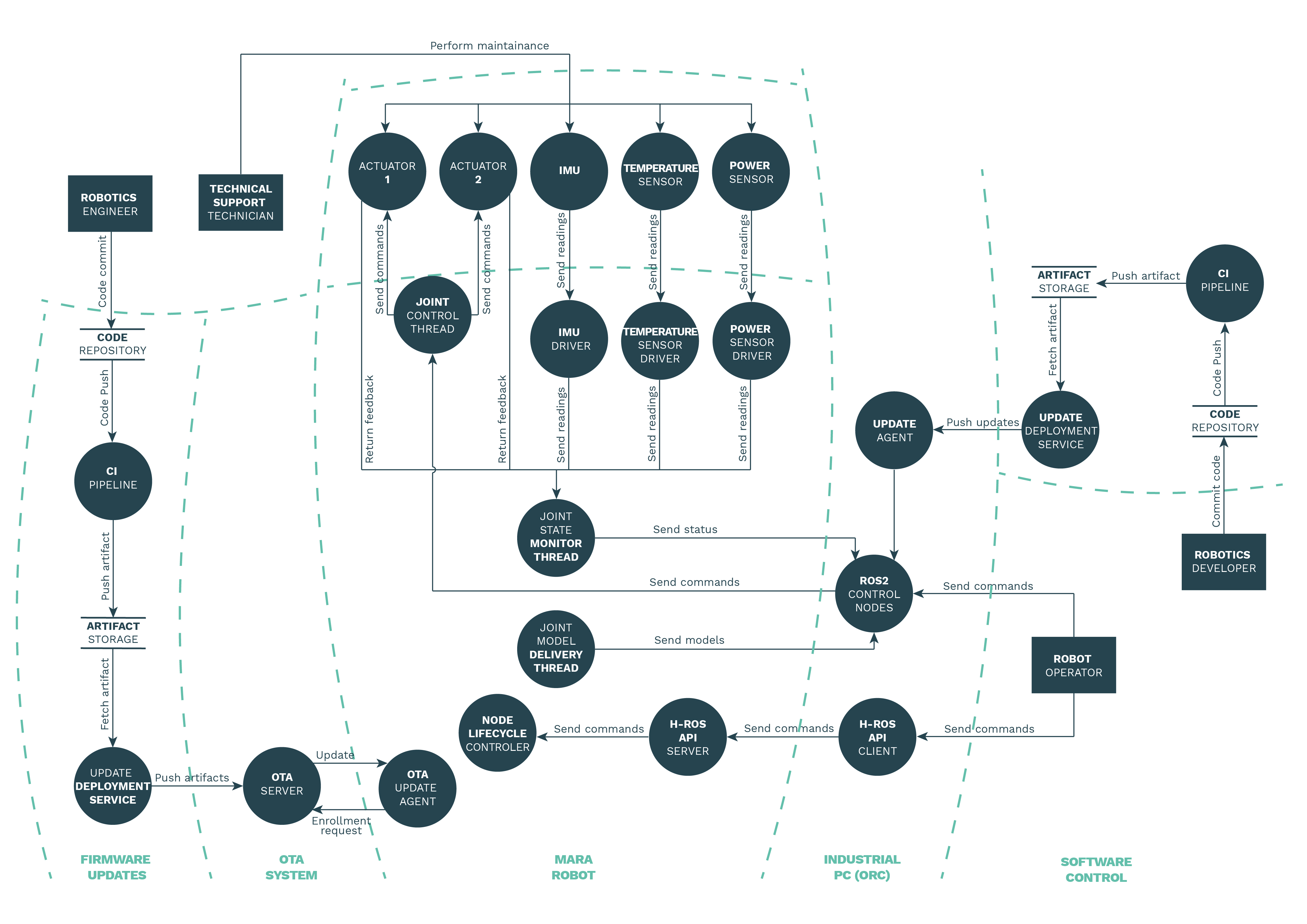

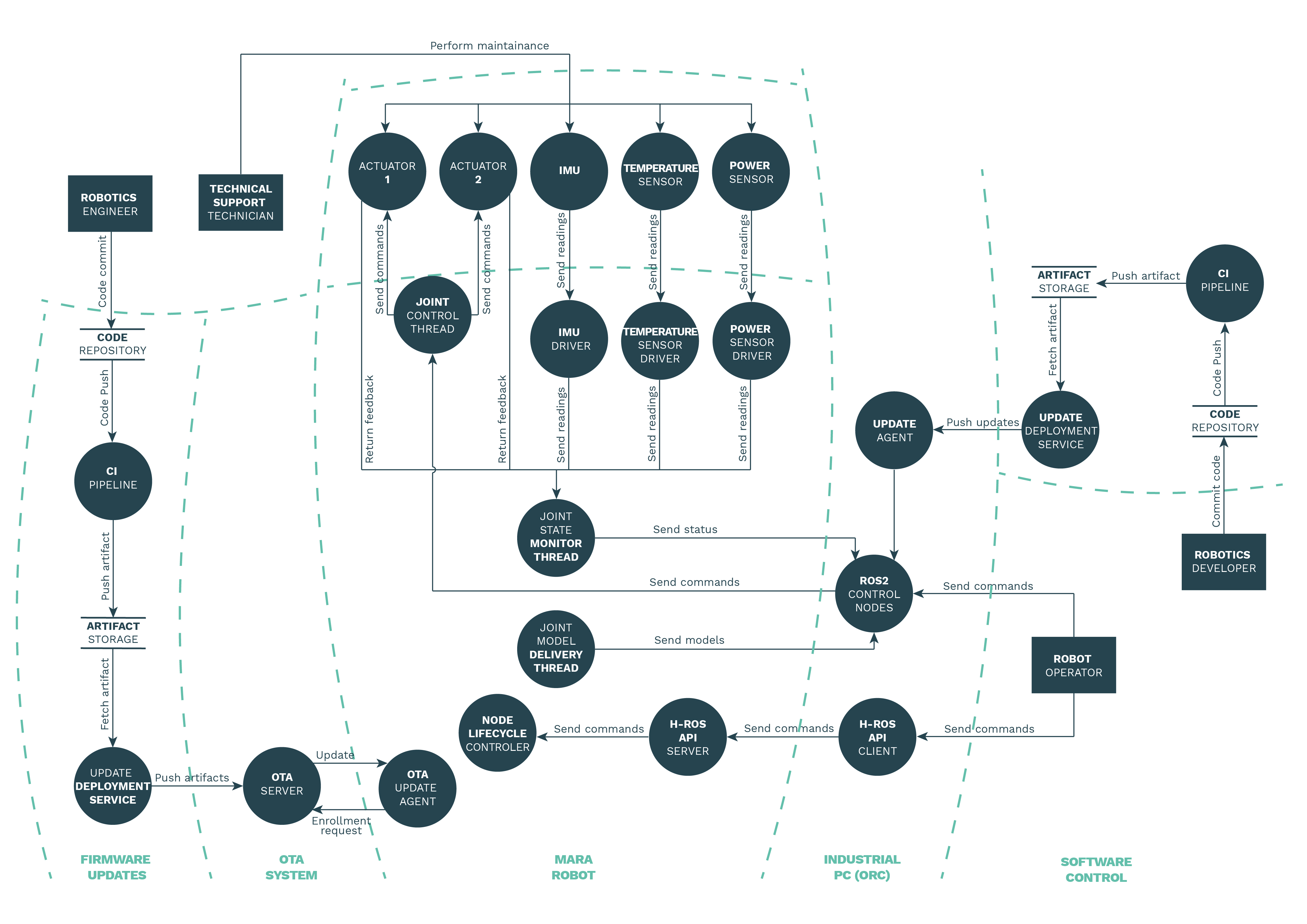

The architecture dataflow diagram displays and interrelates the different components, actors and assets that play a relevant role in the system. The diagram also shows the interactions between components and the communication channels (both real and virtual) used to exchange data between them.

A trust boundary is any place where various principals come together, that is, where entities with different privileges interact. An attack surface is a trust boundary and a direction from which an attacker (often captured with another trust boundary) could launch an attack. A system that exposes many interfaces to the outside generally presents more complex trust boundary diagrams and larger attack surfaces.
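One way to make these definitions concrete is to list the data flows of a system and flag those whose endpoints sit in different trust zones. The following is a minimal sketch under stated assumptions: the component names, zone names and flows are hypothetical placeholders and are not taken from the MARA dataflow diagram.

```python
from typing import Dict, List, Tuple

# Hypothetical assignment of components to trust zones (illustration only).
ZONE_OF: Dict[str, str] = {
    "ota_server": "external_network",
    "orc": "plant_network",
    "robot_module": "robot_internal_network",
    "joint_sensor": "robot_internal_network",
}

# Hypothetical data flows as (source, destination) pairs.
FLOWS: List[Tuple[str, str]] = [
    ("ota_server", "orc"),
    ("orc", "robot_module"),
    ("joint_sensor", "robot_module"),
]


def boundary_crossings(
    flows: List[Tuple[str, str]], zone_of: Dict[str, str]
) -> List[Tuple[str, str]]:
    """Return the flows whose endpoints belong to different trust zones."""
    return [(src, dst) for src, dst in flows if zone_of[src] != zone_of[dst]]


if __name__ == "__main__":
    for src, dst in boundary_crossings(FLOWS, ZONE_OF):
        print(f"{src} -> {dst} crosses {ZONE_OF[src]} / {ZONE_OF[dst]}")
```

Each flagged flow is a candidate entry in the attack surface. Flows that stay inside one zone are not automatically safe, but they are reviewed under that zone's own assumptions.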

- Restart the robot
- Restart the ROS graph
- Physically interact with the robot
- Receive software updates from OTA
- Check for updates
- Configure ORC control functionalities

- Start the robot
- Control the robot
- Work alongside the robot

- Start the robot
- Control the robot
- Configure the robot
- Include the robot into the industrial network
- Check for updates
- Configure and interact with the ORC

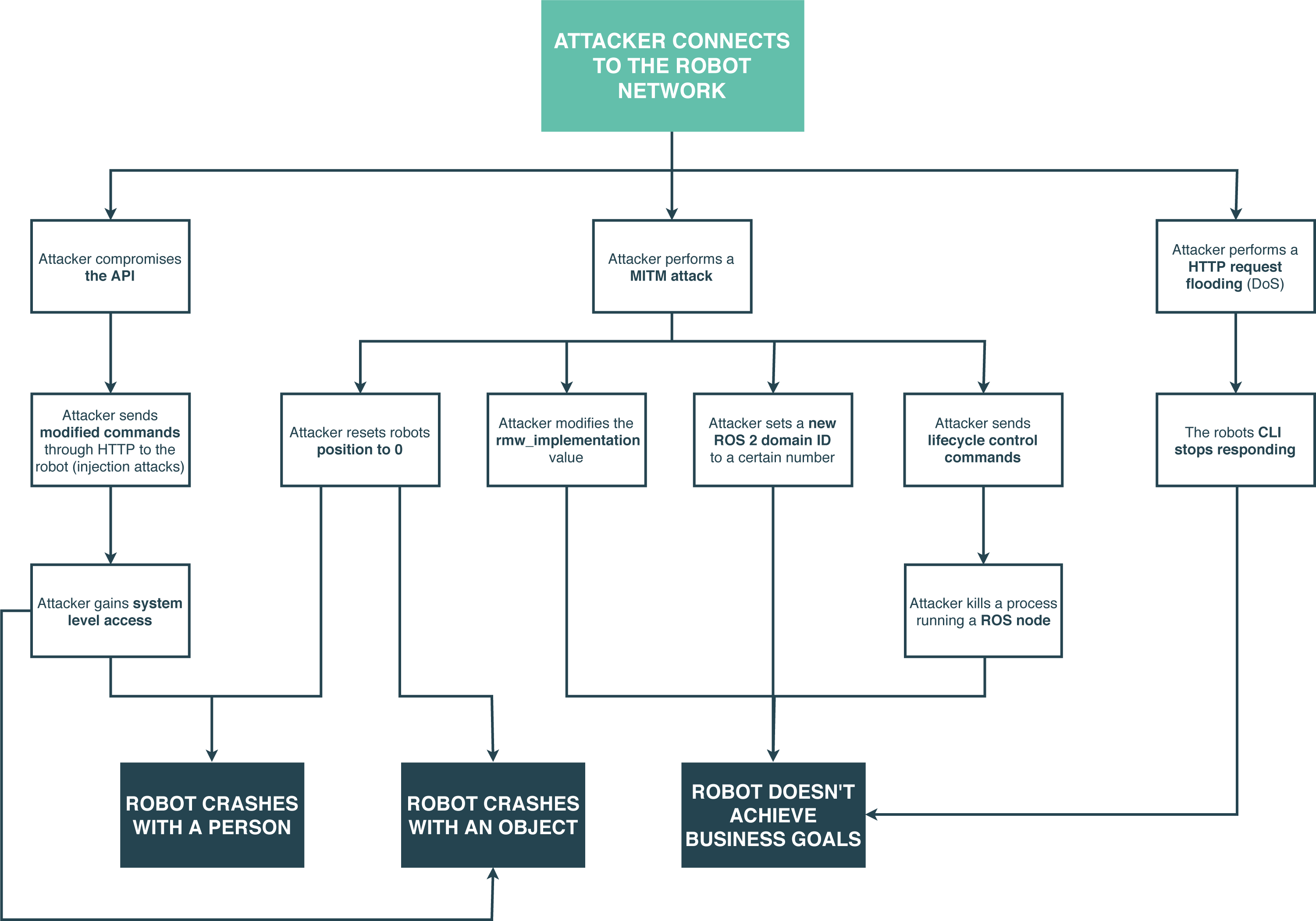

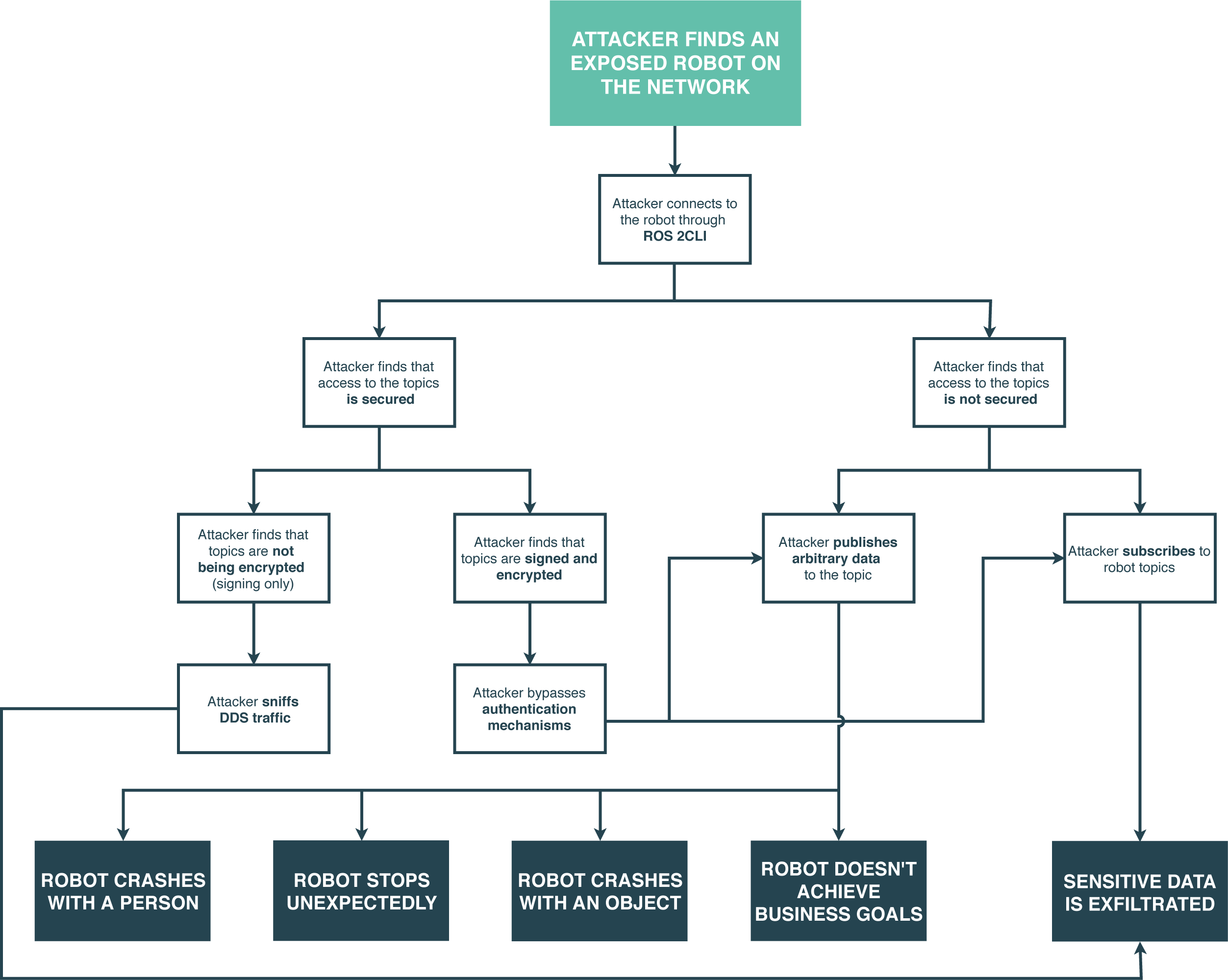

A threat is the risk that an attacker may exploit a vulnerability to breach security and thereby cause harm. The objective of this robot threat analysis is to identify the threats and, correspondingly, the attack vectors for the case study. An attack vector is a path that an attacker could follow to perform an attack on the system.

Several attack vectors are identified and classified depending on the risk and services involved. Risks are detailed and evaluated using threat modeling methodologies, namely STRIDE and DREAD. We present below a few selected threats and the corresponding attacks.
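To illustrate how the two methodologies combine, STRIDE assigns each threat a category (Spoofing, Tampering, Repudiation, Information disclosure, Denial of service, Elevation of privilege), while DREAD rates it on five factors (Damage, Reproducibility, Exploitability, Affected users, Discoverability). The sketch below assumes a 0 to 10 scale per factor and a plain average as the overall score, which is a common convention but is not stated in this study. The ratings and category assignments shown are hypothetical and are not the values from the report.

```python
from dataclasses import dataclass
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information disclosure"
    DENIAL_OF_SERVICE = "Denial of service"
    ELEVATION_OF_PRIVILEGE = "Elevation of privilege"


@dataclass(frozen=True)
class DreadRating:
    damage: int
    reproducibility: int
    exploitability: int
    affected_users: int
    discoverability: int

    def __post_init__(self) -> None:
        # Assumed scale: every factor is rated from 0 (none) to 10 (worst).
        for name, value in vars(self).items():
            if not 0 <= value <= 10:
                raise ValueError(f"{name} must be within 0..10, got {value}")

    def score(self) -> float:
        """Overall risk as the plain average of the five factors."""
        return sum(vars(self).values()) / 5


# Hypothetical classification and ratings, for illustration only.
THREATS = {
    "modified kernel without real-time support": (
        Stride.TAMPERING,
        DreadRating(8, 5, 4, 8, 3),
    ),
    "spoofed joint sensor": (
        Stride.SPOOFING,
        DreadRating(7, 6, 5, 6, 5),
    ),
}

if __name__ == "__main__":
    ranked = sorted(THREATS.items(), key=lambda kv: kv[1][1].score(), reverse=True)
    for name, (category, rating) in ranked:
        print(f"{rating.score():4.1f}  {category.value:<10}  {name}")
```

Sorting by the averaged score gives a first prioritization, which can then be refined by weighting individual factors if some matter more for a given plant.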

An attacker compromises the real-time clock to disrupt the kernel real-time scheduling guarantees. A possible vector includes the deployment of a modified kernel without optimized real-time capabilities enabled.

Enable verified boot in U-Boot to prevent booting altered kernels. Use the built-in TPM to store firmware public keys and define a Root of Trust (RoT).

An attacker spoofs a robot sensor (by e.g. replacing the sensor itself or manipulating the bus).

Add a noise and out-of-bounds reading detection mechanism on the robot, so that suspicious readings are discarded or an alert is raised to the user. Add detection of sensor disconnections.
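The mitigation above can be sketched as a small per-sensor monitor. This is a minimal illustration, not the mechanism implemented on MARA: the class name, the thresholds and the use of radians and seconds are all assumptions made for the example.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class JointSensorMonitor:
    """Hypothetical plausibility monitor for one joint position sensor."""

    lower: float      # lowest physically valid reading (assumed, rad)
    upper: float      # highest physically valid reading (assumed, rad)
    max_step: float   # largest plausible change between readings (assumed)
    timeout_s: float  # silence longer than this counts as a disconnection
    last_value: Optional[float] = None
    last_stamp: Optional[float] = None

    def check(self, value: float, stamp: float) -> str:
        """Classify a reading as 'ok', 'out_of_bounds' or 'noisy'."""
        # Any message, even a rejected one, shows the sensor is still attached.
        self.last_stamp = stamp
        if math.isnan(value) or not (self.lower <= value <= self.upper):
            return "out_of_bounds"
        if self.last_value is not None and abs(value - self.last_value) > self.max_step:
            # Rejected readings do not update last_value, so a real jump keeps
            # being flagged until an operator resets the monitor.
            return "noisy"
        self.last_value = value
        return "ok"

    def is_disconnected(self, now: float) -> bool:
        """True if no reading has arrived within the timeout."""
        return self.last_stamp is None or (now - self.last_stamp) > self.timeout_s


if __name__ == "__main__":
    monitor = JointSensorMonitor(lower=-3.14, upper=3.14, max_step=0.2, timeout_s=0.5)
    for stamp, value in [(0.00, 0.10), (0.01, 0.15), (0.02, 2.50), (0.03, 9.00)]:
        print(stamp, value, monitor.check(value, stamp))
    print("disconnected at t=1.0:", monitor.is_disconnected(1.0))
```

A caller would discard any reading that is not `ok` and raise an alert to the user, either immediately or after several consecutive rejections.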

Beyond listing the threats and related attacks, the assessment can be taken further with other mechanisms, such as attack trees or attack libraries. Attack trees are a method to find threats, a way to organize threats found with other building blocks, or both. They provide a formal, methodical way of describing the security of systems based on varying attack paths: attacks against a system are represented in a tree-like structure. A few examples are presented below:
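Independently of the trees in the report, the structure itself can be sketched in code: the root is the attacker's goal, inner nodes combine sub-goals with AND or OR gates, and leaves are concrete attack steps. The tree below is a hypothetical example loosely based on the kernel tampering threat described earlier. Its node names and feasibility flags are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AttackNode:
    goal: str
    gate: str = "LEAF"      # one of "AND", "OR", "LEAF"
    feasible: bool = False  # only meaningful for leaves
    children: List["AttackNode"] = field(default_factory=list)

    def achievable(self) -> bool:
        """AND needs every child to succeed, OR needs at least one."""
        if self.gate == "LEAF":
            return self.feasible
        results = [child.achievable() for child in self.children]
        return all(results) if self.gate == "AND" else any(results)


def build_tree(verified_boot_enabled: bool) -> AttackNode:
    """Hypothetical tree for 'disrupt real-time scheduling guarantees'."""
    return AttackNode(
        goal="Disrupt real-time scheduling guarantees",
        gate="OR",
        children=[
            AttackNode(
                goal="Deploy a modified kernel",
                gate="AND",
                children=[
                    AttackNode("Gain write access to boot storage", feasible=True),
                    AttackNode(
                        "Boot an unsigned kernel",
                        feasible=not verified_boot_enabled,
                    ),
                ],
            ),
            AttackNode("Tamper with the clock source directly", feasible=False),
        ],
    )


if __name__ == "__main__":
    for enabled in (False, True):
        reachable = build_tree(enabled).achievable()
        print(f"verified boot={enabled}: root goal achievable={reachable}")
```

Evaluating the tree before and after a mitigation shows which leaves must be cut to make the root goal unreachable, which helps when deciding where a countermeasure has the most effect.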

Beyond the threat model, our team also performed a pentesting activity for this case study and robot. Vulnerabilities found were rated on a severity scale according to the guidelines stated in the Common Vulnerability Scoring System (CVSS) and the Robot Vulnerability Scoring System (RVSS), resulting in a score between 0 and 10. RVSS follows the same principles as CVSS, but adds aspects specific to robotic systems that are required to capture the complexity of robot vulnerabilities. Several vulnerabilities were found, resulting in the following classification by severity: