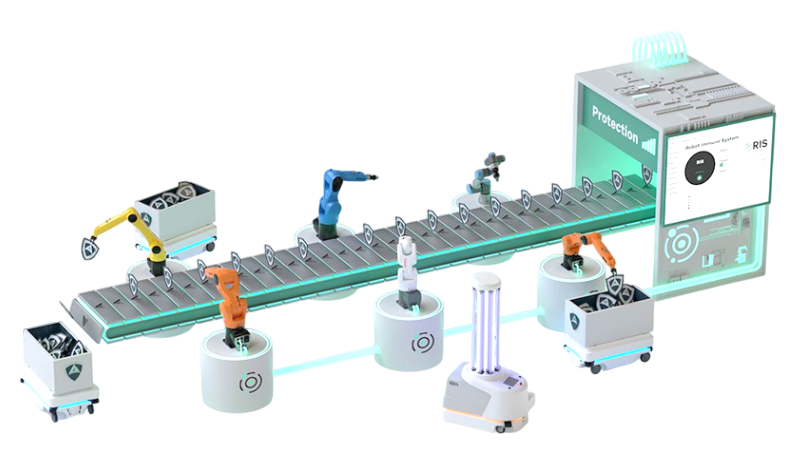

Cybersecurity services for robotics

Security is not a product; it is a process. Our cybersecurity services offer end-to-end offensive security research, security certification, and compliance for robots and robot components. We help you find vulnerabilities and flaws in your robots before others do.